Deep Learning: Supplementary Notes

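- Understanding convolutional neural networks (convnets)

- Using data augmentation to reduce overfitting

- Using a pretrained convnet for feature extraction

- Fine-tuning a pretrained convnet

- Visualizing what convnets learn and how they make classification decisions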

Understanding convolutional neural networks

The universal machine learning workflow

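- Define the problem you are facing.

- Choose a measure of success.

- Decide on an evaluation protocol. With plenty of data, use a hold-out validation set. When there are too few samples for a hold-out estimate to be reliable, use K-fold cross-validation. When data is scarce and the evaluation must be very precise, use iterated K-fold validation.

- Prepare the data: (1) format it as tensors; (2) scale the values to small ranges; (3) if different features take values in different ranges, normalize (standardize) them; (4) for small datasets, feature engineering may be needed.

- Choose the last-layer activation and the loss function according to the problem type:
  - binary classification: `sigmoid`, with `binary_crossentropy` loss;
  - multiclass, single-label classification: `softmax`, with `categorical_crossentropy` loss;
  - multiclass, multilabel classification: `sigmoid`, with `binary_crossentropy` loss;
  - regression to arbitrary values: no activation, with `mse` loss;
  - regression to values between 0 and 1: `sigmoid`, with `mse` or `binary_crossentropy` loss.

- Scale up and develop a model that overfits: add more layers, make each layer bigger, and train for more epochs. Monitor the validation metrics; once performance on the validation data starts to degrade, the model has begun to overfit.

- Regularize the model and tune its hyperparameters: add dropout, try different architectures, add L1 or L2 regularization, and try different hyperparameters (such as the number of units per layer or the optimizer's learning rate). Iterate on feature engineering by adding new features or removing uninformative ones.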
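The evaluation-protocol and data-preparation bullets can be sketched in plain Python. This is a minimal illustration, not Keras code. `k_fold_indices` and `standardize` are hypothetical helper names, and the sketch assumes a single numeric feature. Calling it again with different `seed` values and averaging the scores approximates iterated K-fold validation. Normalization statistics are computed on the training folds only, so no information leaks from the validation fold.

```python
import random
import statistics


def k_fold_indices(n_samples, k, seed=None):
    """Hypothetical helper: yield (train_idx, val_idx) pairs for K-fold validation.

    If `seed` is given, indices are shuffled first; repeating the whole
    procedure with different seeds approximates iterated K-fold validation.
    """
    indices = list(range(n_samples))
    if seed is not None:
        random.Random(seed).shuffle(indices)
    fold_size = n_samples // k
    for fold in range(k):
        start = fold * fold_size
        # The last fold absorbs any samples left over by the integer division.
        end = n_samples if fold == k - 1 else start + fold_size
        yield indices[:start] + indices[end:], indices[start:end]


def standardize(train_values, other_values):
    """Hypothetical helper: standardize one feature using training statistics only."""
    mean = statistics.mean(train_values)
    std = statistics.pstdev(train_values) or 1.0  # constant feature: avoid dividing by zero
    train_scaled = [(x - mean) / std for x in train_values]
    other_scaled = [(x - mean) / std for x in other_values]
    return train_scaled, other_scaled


# Usage with a toy single-feature dataset:
data = [float(x) for x in range(10)]
for train_idx, val_idx in k_fold_indices(len(data), k=3, seed=0):
    train, val = standardize([data[i] for i in train_idx], [data[i] for i in val_idx])
    # ... build a fresh model, fit it on `train`, evaluate it on `val` ...
```

In each fold, a fresh model is trained on the training part and evaluated on the held-out part. The final score is the average of the K validation scores.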

Introduction

```python
from keras import models
```

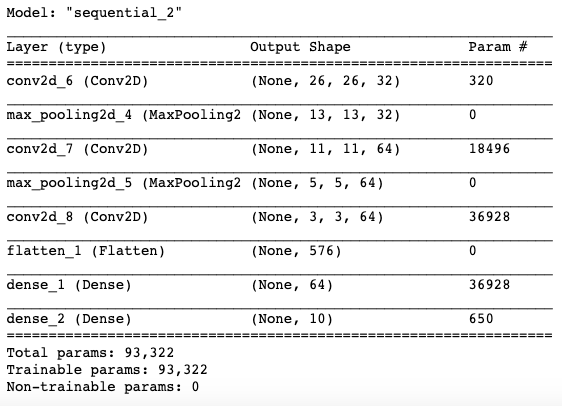

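The width and height dimensions shrink as the network gets deeper, while the number of channels is controlled by the first argument passed to each `Conv2D` layer (32 or 64). The last layer is a 10-way classifier: a layer with 10 outputs and a softmax activation.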
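The shrinking height and width can be checked by hand. The sketch below assumes an architecture consistent with the description above: 3×3 `Conv2D` layers without padding, with 32, 64 and 64 filters, separated by 2×2 max pooling, on 28×28×1 MNIST inputs. The original code is truncated, so treat that layer list as an assumption. `trace_shapes` and `softmax` are illustrative helpers, not Keras functions.

```python
import math


def conv_output_size(size, kernel=3):
    """Spatial size after an unpadded ('valid') convolution with stride 1."""
    return size - kernel + 1


def pool_output_size(size, pool=2):
    """Spatial size after non-overlapping max pooling (the remainder is dropped)."""
    return size // pool


def trace_shapes(input_shape, layer_specs):
    """Return the (height, width, channels) output of each layer in `layer_specs`.

    Each spec is ("conv", filters) or ("pool", None); `filters` becomes the new
    channel count, which is why the depth is set by Conv2D's first argument.
    """
    height, width, channels = input_shape
    shapes = []
    for kind, filters in layer_specs:
        if kind == "conv":
            height, width = conv_output_size(height), conv_output_size(width)
            channels = filters
        elif kind == "pool":
            height, width = pool_output_size(height), pool_output_size(width)
        shapes.append((height, width, channels))
    return shapes


def softmax(logits):
    """Turn raw scores (one per class) into probabilities that sum to 1."""
    largest = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - largest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Assumed architecture: Conv(32) -> Pool -> Conv(64) -> Pool -> Conv(64)
shapes = trace_shapes((28, 28, 1), [("conv", 32), ("pool", None),
                                    ("conv", 64), ("pool", None), ("conv", 64)])
print(shapes)  # [(26, 26, 32), (13, 13, 32), (11, 11, 64), (5, 5, 64), (3, 3, 64)]
```

Under these assumptions, the final feature map is 3×3×64. It is flattened to 576 values before the dense layers that end in the 10-way softmax.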

```python
from keras.datasets import mnist
```
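Here is a sketch of the usual MNIST preprocessing in plain Python. It assumes each image is a grid of 0-255 integers, as returned by `mnist.load_data()`. Pixel values are scaled into [0, 1] and a channel axis is added. Labels are one-hot encoded for `categorical_crossentropy`. With NumPy arrays, the same steps are typically written as `images.reshape((n, 28, 28, 1)).astype('float32') / 255` and `keras.utils.to_categorical(labels)`. `to_float_image` and `one_hot` below are hypothetical helpers.

```python
def to_float_image(image):
    """Hypothetical helper: scale a grid of 0-255 ints to [0, 1] and add a channel axis."""
    return [[[pixel / 255.0] for pixel in row] for row in image]


def one_hot(label, num_classes=10):
    """Hypothetical helper: encode a digit label as a one-hot vector."""
    vector = [0.0] * num_classes
    vector[label] = 1.0
    return vector


# Toy 2x2 "image" instead of a real 28x28 MNIST digit:
print(to_float_image([[0, 255], [51, 102]]))  # [[[0.0], [1.0]], [[0.2], [0.4]]]
print(one_hot(3))  # [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
```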

Cats vs. dogs classification

Split the data into training, validation, and test sets.

```python
import os
import shutil
```

The training, validation, and test sets are split in a 6:3:1 ratio (validation set: 200).
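Here is a sketch of the split using `os` and `shutil`. It assumes the images for one class sit in a single source directory with a common filename prefix, such as `cat.0.jpg`, `cat.1.jpg` and so on (this naming is an assumption). `split_class` is a hypothetical helper that copies files into `train/`, `validation/` and `test/` subdirectories with one folder per class. That per-class layout is what Keras's `flow_from_directory` expects. Files are taken in sorted order for reproducibility; shuffling them first would give a random split.

```python
import os
import shutil


def split_class(src_dir, dst_dir, class_name, prefix, ratios=(6, 3, 1)):
    """Hypothetical helper: copy one class's images into train/validation/test folders.

    Files in `src_dir` whose names start with `prefix` are taken in sorted order
    and copied to dst_dir/<split>/<class_name>/ according to `ratios`.
    """
    files = sorted(f for f in os.listdir(src_dir) if f.startswith(prefix))
    total = sum(ratios)
    counts = [len(files) * r // total for r in ratios]
    counts[0] += len(files) - sum(counts)  # rounding leftovers go to the training split
    start = 0
    result = {}
    for split, count in zip(("train", "validation", "test"), counts):
        target = os.path.join(dst_dir, split, class_name)
        os.makedirs(target, exist_ok=True)
        for fname in files[start:start + count]:
            shutil.copyfile(os.path.join(src_dir, fname), os.path.join(target, fname))
        start += count
        result[split] = count
    return result
```

For two classes, call it once per class, e.g. `split_class(src, dst, "cats", "cat.")` and `split_class(src, dst, "dogs", "dog.")`, where `src` and `dst` are your own paths.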

Defining the network

```python
from keras import layers
```
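The sketch below counts weights layer by layer to show where a convnet's parameters come from. It assumes the classic cats-vs-dogs architecture. That architecture has four 3×3 `Conv2D` blocks (32, 64, 128 and 128 filters), each followed by 2×2 max pooling, on 150×150×3 inputs. These are followed by `Flatten`, a 512-unit `Dense` layer and a single sigmoid output unit. The original definition is truncated, so this layer list is an assumption. `summarize` is a hypothetical helper; on a real Keras model, `model.summary()` reports per-layer parameter counts.

```python
def conv_params(kernel, in_channels, filters):
    # Each filter has kernel*kernel*in_channels weights plus one bias.
    return (kernel * kernel * in_channels + 1) * filters


def dense_params(in_features, units):
    # One weight per input per unit, plus one bias per unit.
    return (in_features + 1) * units


def summarize(input_shape=(150, 150, 3), filters=(32, 64, 128, 128), dense_units=512):
    """Hypothetical helper: per-layer parameter counts for an assumed convnet."""
    height, width, channels = input_shape
    rows = []
    for f in filters:
        rows.append((f"Conv2D({f})", conv_params(3, channels, f)))
        height, width, channels = height - 2, width - 2, f  # 3x3 unpadded convolution
        height, width = height // 2, width // 2  # 2x2 max pooling (no parameters)
    flat = height * width * channels
    rows.append((f"Dense({dense_units})", dense_params(flat, dense_units)))
    rows.append(("Dense(1, sigmoid)", dense_params(dense_units, 1)))
    return flat, rows


flat, rows = summarize()
for name, count in rows:
    print(f"{name:18} {count:>10,}")
print("flattened features:", flat)  # 6272
print("total:", sum(c for _, c in rows))  # 3453121
```

Under these assumptions, most of the roughly 3.45 million parameters sit in the first `Dense` layer. This is why shrinking the feature map before flattening matters.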

Configuring the model

```python
from keras import optimizers
```
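Assume the network ends in a single sigmoid unit. The binary-classification guideline in the workflow list then pairs it with the `binary_crossentropy` loss. The plain-Python functions below show what that loss and a 0.5-threshold accuracy metric compute. Their names are hypothetical and they are not the Keras implementations. Clipping predictions with a small epsilon keeps `log` finite.

```python
import math


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy between 0/1 targets and predicted probabilities."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip so that log() never sees 0
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)


def binary_accuracy(y_true, y_pred, threshold=0.5):
    """Fraction of predictions on the correct side of `threshold`."""
    hits = sum(1 for t, p in zip(y_true, y_pred) if (p > threshold) == bool(t))
    return hits / len(y_true)


print(binary_crossentropy([1, 0], [0.9, 0.1]))  # about 0.105 (= -ln 0.9)
print(binary_accuracy([1, 0, 1], [0.9, 0.2, 0.3]))  # 2 of 3 correct
```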

Data preprocessing

```python
from keras.preprocessing.image import ImageDataGenerator
```
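Conceptually, a directory iterator rescales pixels, turns class folder names into labels, and yields batches indefinitely. The sketch assumes images are already decoded into flat lists of 0-255 integers. `class_indices` and `batch_generator` are hypothetical helpers. As with `flow_from_directory`, class indices follow the alphanumeric order of the class folder names, so `cats` maps to 0 and `dogs` to 1. Unlike the Keras iterator, this sketch does not shuffle.

```python
def class_indices(class_dirs):
    """Map class folder names to integer labels in alphanumeric order."""
    return {name: index for index, name in enumerate(sorted(class_dirs))}


def batch_generator(samples, batch_size, rescale=1.0 / 255):
    """Hypothetical stand-in for a Keras directory iterator.

    `samples` is a list of (pixels, label) pairs, where `pixels` is a flat list
    of 0-255 integers. Batches are yielded forever, in order, without shuffling.
    """
    while True:
        for start in range(0, len(samples), batch_size):
            batch = samples[start:start + batch_size]
            images = [[p * rescale for p in pixels] for pixels, _ in batch]
            labels = [label for _, label in batch]
            yield images, labels


labels = class_indices(["dogs", "cats"])
print(labels)  # {'cats': 0, 'dogs': 1}
gen = batch_generator([([0, 255], labels["cats"]), ([255, 0], labels["dogs"])], batch_size=2)
images, batch_labels = next(gen)
print(batch_labels)  # [0, 1]
```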

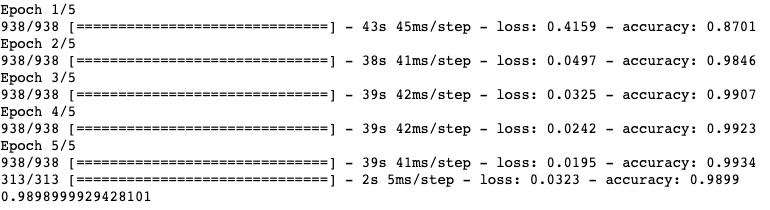

Training the model

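```python
# In TensorFlow 2.x, fit_generator is deprecated and model.fit accepts generators directly.
history = model.fit_generator(...)  # arguments elided in the original
```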
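Such generators loop forever, so the training call needs `steps_per_epoch` to know where an epoch ends. A validation generator likewise needs `validation_steps`. A common choice is the number of samples divided by the batch size, rounded up. The helpers below are hypothetical and only illustrate the arithmetic. `itertools.islice` stands in for the framework drawing exactly one epoch of batches.

```python
import itertools
import math


def steps_per_epoch(num_samples, batch_size):
    """Number of batches needed to see every sample once."""
    return math.ceil(num_samples / batch_size)


def endless_batch_sizes(num_samples, batch_size):
    """Hypothetical infinite generator: yields the size of each batch, forever."""
    while True:
        for start in range(0, num_samples, batch_size):
            yield min(batch_size, num_samples - start)


def one_epoch(generator, steps):
    """Draw exactly `steps` batches from an endless generator."""
    return list(itertools.islice(generator, steps))


steps = steps_per_epoch(2000, 20)
print(steps)  # 100
epoch = one_epoch(endless_batch_sizes(2000, 20), steps)
print(sum(epoch))  # 2000: every sample seen exactly once
```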

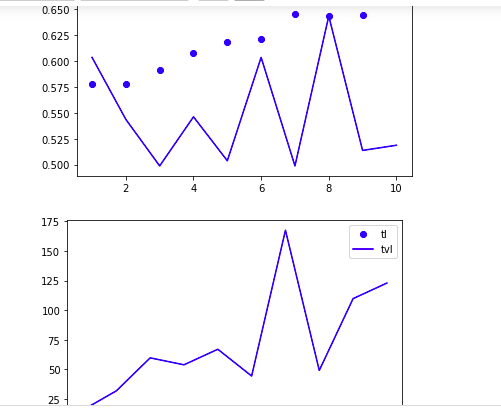

Plotting the training and validation metrics

```python
import matplotlib.pyplot as plt
```
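Besides plotting, the curves can be inspected numerically. The sketch assumes `history.history` holds per-epoch lists under `'loss'` and `'val_loss'`. The accuracy key is `'acc'` or `'accuracy'`, depending on the Keras version. It finds the epoch with the lowest validation loss, after which the model starts to overfit. It also smooths a noisy curve with an exponential moving average. `best_epoch` and `smooth_curve` are illustrative helpers, and the toy history values are made up for the example.

```python
def best_epoch(val_losses):
    """1-based epoch with the lowest validation loss."""
    return min(range(len(val_losses)), key=lambda i: val_losses[i]) + 1


def smooth_curve(points, factor=0.8):
    """Exponential moving average: each point is blended with the previous smoothed value."""
    smoothed = []
    for point in points:
        if smoothed:
            smoothed.append(smoothed[-1] * factor + point * (1 - factor))
        else:
            smoothed.append(point)
    return smoothed


# Toy stand-in for history.history (illustrative values, not real results).
history_dict = {"loss": [0.69, 0.60, 0.52, 0.45, 0.38],
                "val_loss": [0.68, 0.62, 0.58, 0.60, 0.65]}
epoch = best_epoch(history_dict["val_loss"])
print(epoch)  # 3: validation loss rises afterwards while training loss keeps falling
print(smooth_curve(history_dict["val_loss"]))
```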

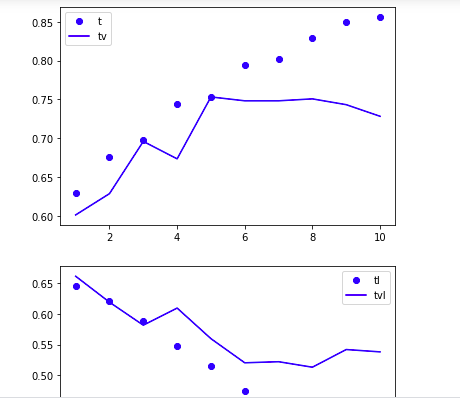

Data augmentation

Using dropout and a data augmentation generator

```python
from keras import models
model = models.Sequential()
```
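Dropout randomly zeroes a fraction of a layer's outputs during training. The sketch below shows inverted dropout. The surviving values are scaled by 1/(1 - rate), so each unit's expected value is unchanged and nothing needs rescaling at inference time. Keras's `Dropout` layer scales its inputs this way. `dropout` here is only a plain-Python illustration, not its implementation.

```python
import random


def dropout(values, rate, training=True, rng=None):
    """Inverted dropout on a list of activations (illustration, not the Keras code).

    During training each value is zeroed with probability `rate`; survivors are
    divided by (1 - rate) so the expected value of each unit is unchanged.
    At inference time the input passes through untouched.
    """
    if not training or rate == 0.0:
        return list(values)
    rng = rng or random.Random()
    keep_prob = 1.0 - rate
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


activations = [1.0] * 10
print(dropout(activations, rate=0.5, rng=random.Random(42)))  # each entry is 0.0 or 2.0
print(dropout(activations, rate=0.5, training=False))  # unchanged
```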

```python
train_datagen = ImageDataGenerator(...)  # augmentation arguments elided in the original
```
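Data augmentation applies random, label-preserving transformations to each training image, so the model never sees exactly the same picture twice. The sketch shows two of them on a small 2-D grid: a random horizontal shift and a random horizontal flip. Their parameters echo `ImageDataGenerator`'s `width_shift_range` and `horizontal_flip` arguments. However, `shift_width`, `horizontal_flip` and `random_augment` are hypothetical helpers, not its implementation. Pixels uncovered by a shift are filled with a constant here. `ImageDataGenerator` instead fills them according to its `fill_mode` argument (default `'nearest'`).

```python
import random


def horizontal_flip(image):
    """Mirror a 2-D image (list of rows) left to right."""
    return [list(reversed(row)) for row in image]


def shift_width(image, dx, fill=0):
    """Shift every row right by dx pixels (left if dx < 0), filling gaps with `fill`."""
    width = len(image[0])
    shifted = []
    for row in image:
        new_row = [fill] * width
        for x, value in enumerate(row):
            if 0 <= x + dx < width:
                new_row[x + dx] = value
        shifted.append(new_row)
    return shifted


def random_augment(image, rng, width_shift_range=0.2, flip=True):
    """Hypothetical helper: random horizontal shift (fraction of width) plus a coin-flip mirror."""
    max_dx = int(len(image[0]) * width_shift_range)
    augmented = shift_width(image, rng.randint(-max_dx, max_dx))
    if flip and rng.random() < 0.5:
        augmented = horizontal_flip(augmented)
    return augmented


image = [[1, 2, 3, 4, 5], [1, 2, 3, 4, 5]]
print(shift_width(image, 1))  # [[0, 1, 2, 3, 4], [0, 1, 2, 3, 4]]
print(horizontal_flip(image))  # [[5, 4, 3, 2, 1], [5, 4, 3, 2, 1]]
print(random_augment(image, random.Random(0)))  # a randomly shifted and/or flipped copy
```

Augmentation belongs on the training data only. Validation and test images should just be rescaled, so that evaluation reflects unmodified inputs.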

```python
history = model.fit(...)  # arguments elided in the original
```